This is a paper originally submitted to Swinburne University as part of a postgraduate project.

An Anthropic Universe?

Imagine a puddle waking up one morning and thinking, “This is an interesting world I find myself in, an interesting hole I find myself in, fits me rather neatly, doesn't it? In fact it fits me staggeringly well, must have been made to have me in it!” (Douglas Adams, 1998)

Is there a deep and fundamental relationship between the existence of the laws of physics and the fact that there exist beings with the capacity to understand those laws? The idea that our existence suggests something about the fundamental structure of reality stands in stark opposition to the general trend in science since the Copernican Revolution, which has moved humanity from the centre of the universe to little more than a footnote in the great corpus of fundamental discoveries in physics and cosmology. This trend is typified by Stephen Hawking’s now famous quip that “The human race is just a chemical scum on a moderate-sized planet, orbiting around a very average star in the outer suburb of one among a hundred billion galaxies. We are so insignificant that I can't believe the whole universe exists for our benefit” (Hawking, 1995). But what if the laws of physics have an especially biofriendly form? Would this suggest that, rather than being just any old ‘chemical scum’, thoughtful observers like ourselves are somehow written into the laws of physics? This is the question of “fine-tuning”. How “like ourselves” must observers be? How peculiar must the laws of physics be to permit life, and how narrow a tolerance does life have for changes in the fundamental parameters? If the universe is fine-tuned for life then the anthropic principle seeks to give an account of why this is so.

The anthropic principle, in its most general form, is the claim that the laws of physics are consistent with the existence of observers. But what counts as an observer? The word “anthropic” has its origins in the Greek anthrōpikós, meaning human; indeed the writer of the project question hints that it is us as observers that makes a principle anthropic. However, when the principle is discussed in scientific or philosophical contexts it is clearly not restricted to the existence of humans. As Nick Bostrom writes, “The term “anthropic” is a misnomer. Reasoning about observation selection effects has nothing in particular to do with homo sapiens, but rather with observers in general.” (Bostrom, 2002). However, Bostrom’s more general observers are, at least implicitly, restricted to being carbon-based. We will see there is some significance to this “carbon-based” requirement, as much is made of the “fine-tuning” of the laws of physics for carbon production and hence the possibility of organic chemistry. If we do not begin at the outset by at least constraining our observers to being carbon-based then there is little sense in speculating about fine-tuning. After all, a universe observed by magical beings will be consistent with any magical set of laws of physics one cares to make up, rendering the entire concept of “fine-tuning” meaningless.

What is the Anthropic Principle?

The Anthropic Principle is an umbrella term for a number of different ideas about the relationship between the laws of physics and the type of universe they describe. As stated, this might be as broad as considering how the laws of physics are consistent with the existence of life through to how the laws of physics might mandate information processing which has ultimate cosmological significance.

Let us turn therefore to the question of distinguishing between the various flavors of The Anthropic Principle. It was Brandon Carter who, in 1973, first used the term “Anthropic Principle”. His version stated:

“The universe (and hence the fundamental parameters on which it depends) must be such as to admit the creation of observers within it at some stage.” (Carter, 1973).

Today, this formulation would be considered a version of the Strong Anthropic Principle (SAP) because of its key feature that the laws of physics must have a certain form. Although Barrow and Tipler (1986) discuss three different versions of the SAP alone, for brevity I restrict myself to Carter’s formulation. The principle so expressed is teleological, suggesting that there are ‘ends’ or a ‘purpose’, and in this case that there is a purpose to the laws of physics. The principle does more than just describe how things are – it also predicts how things must be. If discussions about the Anthropic Principle were always about the SAP then the majority might be sidelined as merely speculative metaphysics.

Bostrom (2002) states there are “about thirty” versions of the Anthropic Principle available in the literature. Indeed Carter himself, who invented the term, writes that “the term ‘anthropic principle’ has become so popular that it has been borrowed to describe ideas (e.g. that the universe was teleologically designed for our kind of life, which is what I would call a “finality principle”) that are quite different from, and even contradictory with, what I intended” (Carter, 2004). It would thus become an exercise in the sociology of science to discuss the usefulness of the term “anthropic principle” given how broad a church of philosophies these two words now describe, and so I will follow convention and restrict this project to a description of the two other most frequently used flavours of the anthropic principle: the Weak Anthropic Principle and the Final Anthropic Principle.

The Weak Anthropic Principle (WAP): This is the (seemingly) least controversial version of the principle. However, as with the Anthropic Principle more broadly, there are various ways of formulating the WAP. Barrow and Tipler (1986) phrase it in the following way:

“The observed values of all physical and cosmological quantities are not equally probable but take on values restricted by the requirement that there exist sites where carbon-based life can evolve and by the requirement that the universe be old enough for it to have already done so.”

This states that the laws of physics must have a form that is consistent with the existence of “carbon-based life”. The WAP is merely a descriptive statement which, it may be argued, is little more than a tautology: the laws of physics must have a form which is consistent with our observations (and the most important observation that generally follows once the WAP is mentioned is that we are carbon-based). This observation is singled out because of the apparent fine-tuning required to explain the existence of carbon. Whereas the existence of simpler structures, such as (say) protons, is not balanced on so sharp a knife edge, carbon production seems to be sensitively balanced upon the edge of a knife balanced upon the edge of an even sharper knife. We will see that proponents of the WAP are at pains to demonstrate how so many of the parameters in physics have a very narrow range of possible values that are ‘biofriendly’. As this version of the principle seems merely descriptive, can it be of any use in science? Certainly it is claimed to have been employed to help guide the answer to questions in physics that might otherwise seem mysterious.

Consider, as an illustrative example, Lineweaver & Egan (2007), who discuss what is called ‘The Cosmic Coincidence’: the title sometimes given to the observation that the energy densities of matter and the vacuum are of the same order of magnitude. Why this should be so when it need not be (it would seem at this stage that there is no deeper law from which the values of these energy densities flow) is a mystery, unless one argues that the figures could take on a wide range of values but only some values are consistent with the existence of life (in fact Lineweaver and Egan argue that the values are what should be expected given observers who arise on terrestrial planets). So here the WAP explains away what might otherwise be considered a deep mystery as little more than an observer selection effect: the energy densities have those values because if they did not we would not be here to discuss it. Indeed those values were different in the distant past (long before the possible evolution of complex life on terrestrial planets) and are predicted to be quite different in the future (when, again, it is unlikely that life will be possible, all stars having long since burned out). The idea that the fine-tuning coincidences highlighted by consideration of an anthropic principle are simply a form of bias caused by an observer selection effect is an important consideration to which we turn in the next section. But for completeness, let us mention the last of the flavors of the Anthropic Principle we will discuss: the Final Anthropic Principle (FAP).

The FAP takes the SAP a step further by not only mandating the existence of conscious observers but further predicting that once such observers arise they will never disappear from the universe and eventually gain a full understanding of the laws of physics such that they are able to direct the ultimate evolution of the universe. Most famously promoted by Frank Tipler in “The Physics of Immortality” it is often only a footnote in more sober discussions about the anthropic principle more generally and has been derisively referred to at times as the Completely Ridiculous Anthropic Principle (CRAP).
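As an aside on the Cosmic Coincidence discussed above, the claim that the matter and vacuum energy densities are comparable only around the present epoch can be sketched numerically. The snippet below is a minimal illustration, not a calculation from Lineweaver & Egan: it assumes a simple model in which matter dilutes as the inverse cube of the scale factor while the vacuum energy density stays constant, ignores radiation, and uses round present-day density fractions of 0.3 (matter) and 0.7 (vacuum) chosen purely for illustration. The function name is hypothetical.

```python
def matter_to_vacuum_ratio(a, omega_m=0.3, omega_lambda=0.7):
    """Ratio rho_matter / rho_vacuum at scale factor a (a = 1 today).

    Assumed model: matter density scales as a**-3, vacuum density is constant.
    The default density fractions are illustrative round numbers.
    """
    return (omega_m / omega_lambda) * a ** -3


# Scale factors well before, around, and well after the present epoch
for a in (0.001, 0.1, 1.0, 10.0, 1000.0):
    ratio = matter_to_vacuum_ratio(a)
    print(f"a = {a:>8}: rho_matter / rho_vacuum = {ratio:.3e}")
```

Under these assumptions the ratio is of order one only near a = 1 and differs from unity by many orders of magnitude at early and late times, which is what makes the present-day near-equality look like a coincidence.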

Observer Selection Effects

As Oxford philosopher Nick Bostrom observes: “(If) you catch one hundred fishes, all of which are greater than six inches (then) does this evidence support the hypothesis that no fish in the pond is much less than six inches long? Not if your net can't catch smaller fish.” This selection effect leads to data that is biased. One can draw no valid conclusions about the size of the smallest fish in the pond. So given the observation we exist, what can we conclude about the laws of physics on the basis of this? Can we expect to conclude the universe is fine-tuned without unreasonably stumbling into an observer selection effect? After all, we can only observe what our net can catch: in this case a universe suitable for us. If there are other universes (an issue we will come back to at the end of this project) then the very theories that predict their existence also (in all but very controversial ways) preclude their observation. This is wrong given Barnes’ simulations.
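The fishing-net analogy can be made concrete with a small simulation. This is only a sketch under invented assumptions: the pond population (lengths drawn uniformly between 1 and 12 inches) and the function name are hypothetical, and only the six-inch net limit comes from Bostrom's example.

```python
import random


def catch_fish(pond, min_catchable):
    """Return only the fish the net can hold (length >= min_catchable)."""
    return [length for length in pond if length >= min_catchable]


random.seed(0)  # fixed seed so the run is repeatable

# Hypothetical pond: 10,000 fish with lengths (inches) uniform on [1, 12]
pond = [random.uniform(1.0, 12.0) for _ in range(10_000)]

net_limit = 6.0  # the net cannot hold anything shorter than six inches
catch = catch_fish(pond, net_limit)

smallest_in_pond = min(pond)
smallest_caught = min(catch)

print(f"fish in pond:        {len(pond)}")
print(f"fish in catch:       {len(catch)}")
print(f"smallest in pond:    {smallest_in_pond:.2f} in")
print(f"smallest in catch:   {smallest_caught:.2f} in")
```

Every fish in the catch is at least six inches long whatever the pond actually contains, so the catch alone says nothing about the smallest fish in the pond. The same caution applies to inferring properties of possible universes from the one universe that we, as observers, are able to sample.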

The observation that the universe is consistent with our existence and that our existence actually rather than merely apparently depends crucially upon the apparent fine-tuning of certain “anthropic coincidences” does not admit of the possibility that there may be many such possible universes equally “biofriendly” to the existence of observers. In what follows a number of illustrative examples of supposed fine-tuning will be discussed, and it will be important to keep in mind that we simply do not know what other logically possible or actually existing physically possible universes (what other laws of physics, what other combinations of parameters) would permit the existence of observers. Importantly, if any of the theories predicting the existence of many universes is true then this neatly solves the apparent fine-tuning problem, and talk of fine-tuning as being anything more than mere coincidence falls prey to an observer selection effect. We necessarily find ourselves in one of the many universes which is not hostile to life (or at least which is life-permitting).

Paul Davies subtitled one of his recent books “Why is the universe just right for life?” (Davies, 2007). It could be argued that this assumes a premise some might not wish to concede. Most of the universe is, from what we can tell, entirely uninhabitable. Indeed most of the Earth is far from “just right for life”. It is child’s play to imagine a universe far more “finely tuned” than the one we find ourselves in. We could imagine all planets to be Earth-like, for example. We do not observe this. We will see that although it is difficult to simulate universes where the parameters (or indeed the laws themselves) are different, it is not impossible. And some of these studies seem to suggest that our universe might not be that fine-tuned after all.

Physics, Philosophy and the Anthropic Principle

Interest in the anthropic principle stems in part from the tendency of some to use discoveries in physics to help cash out various metaphysical schemes. It seems that those who write on this topic fall into one of three broad categories:

- Those who see the universe, and in particular the laws and parameters of physics, as clearly fine-tuned for life – and who hold that something or someone is doing this fine-tuning. Those in this category believe that the anthropic principle clearly points to the existence of actual rather than merely apparent design. Peer-reviewed literature supporting this view in mainstream physics and philosophy journals, outside of theological writings, is difficult to find, but the view is typified by (for example) physicist Hugh Ross’s book “The Creator and the Cosmos” (Ross, 1998).

- Those who equivocate about the significance of the anthropic principle but believe it to point to something in genuine need of explanation. While those in this category do not see clear evidence of design – nonetheless they see the fine-tuning of the laws and parameters of the universe as more than just apparent. Many physicists fall into this category and are typified by the books and articles of Paul Davies, Brandon Carter, Martin Rees, John Barrow and others – many of which will be discussed in what follows.

- Those who explain anthropic coincidences as being simply that – coincidences. Those in this category explain the apparent fine-tuning of the laws and parameters of physics as being indicative of a misunderstanding or (likely) an incomplete understanding of the laws of physics. Philosophers and physicists such as Victor Stenger and Bostrom subscribe to this.

The conclusion a writer reaches about fine-tuning and the significance of the anthropic principle depends crucially on the metaphysical position (or lack thereof) that the writer takes.

What is fine-tuned?

We are asked to respond to the question of whether the universe is fine-tuned. Fundamentally, this must be interpreted as asking: are the laws and parameters fine-tuned? We will see that the most frequently discussed issue in the fine-tuning debate is whether the free parameters have very narrow ranges that would permit life. However, it has been argued that “not just…the ‘constants’ such as masses and force strengths, but the very mathematical form of the laws themselves (could be different)” (Davies, 2007). But how reasonable is this claim? Before moving on to the more substantial issue of ‘constants’ being fine-tuned, let us consider whether the form of the laws of physics suggests any kind of ‘fine-tuning’.

The mathematician Emmy Noether in 1915 formulated what has now become known as “Noether’s Theorem”, which can be stated as “for every differentiable symmetry there exists a conservation law”. This is basically a statement of “point of view invariance” (POVI), a constraint imposed by simple considerations of symmetry: the laws of physics should not privilege a given observer. POVI allows most of the laws of physics to be derived as “simple consequences of the symmetries of space and time.” (Stenger, 2006) In particular, the conservation laws of energy (no particular moment in time is to be preferred), linear momentum (no particular position in space is to be preferred) and angular momentum (no particular direction in space is to be preferred) together follow from POVI. Stenger (op. cit.) shows that POVI can be used to derive almost all of classical and much of modern physics.
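The link between symmetry and conservation can be illustrated with a standard textbook sketch for a single coordinate. This is not Stenger's own derivation. The notation (a coordinate q, a Lagrangian L, momentum p and energy E) is introduced here for illustration only.

```latex
% One degree of freedom q(t) with Lagrangian L(q, \dot q, t)
\begin{align*}
\text{Euler--Lagrange equation:}\quad
  & \frac{d}{dt}\frac{\partial L}{\partial \dot q} = \frac{\partial L}{\partial q} \\[4pt]
\text{Invariance under } q \to q + \epsilon:\quad
  & \frac{\partial L}{\partial q} = 0
    \;\Rightarrow\; \frac{dp}{dt} = 0,
    \qquad p \equiv \frac{\partial L}{\partial \dot q} \\[4pt]
\text{Invariance under } t \to t + \epsilon:\quad
  & \frac{\partial L}{\partial t} = 0
    \;\Rightarrow\; \frac{dE}{dt} = -\frac{\partial L}{\partial t} = 0,
    \qquad E \equiv \dot q\,\frac{\partial L}{\partial \dot q} - L
\end{align*}
```

If the Lagrangian does not care where the origin of space is placed, momentum is conserved. If it does not care where the origin of time is placed, energy is conserved. The analogous argument for rotations yields conservation of angular momentum.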

At the risk of belaboring the point, the particular form of the laws of physics, for example in classical mechanics we can write a transformation such as:

is a simple consequence of POVI. In so far as we might refer to this mathematical formula as a law of physics it seems nonsensical to discuss the possibility that it is fine-tuned. So it is with the remainder of the laws, so far as their form is concerned. A complication arises when we consider laws that contain parameters that are not predicted by the theory but which must be measured. Perhaps the most straightforward example is that of Newton’s Law of Universal Gravitation:

$$F = G \frac{m_1 m_2}{r^2}$$

where F is the gravitational force between two masses m_1 and m_2 separated by a distance r, and G is the gravitational constant.

Once more, following Stenger, the form of this law is a consequence of POVI. This law is an inverse square law rather than (say) an inverse cube law because if it were not then the equivalence principle would be violated (inertial and gravitational masses would be different). Why should we be concerned about whether or not inertial and gravitational mass are the same? This is perhaps where the principle of parsimony earns its keep. Although it is not necessarily the case that inertial and gravitational mass be identical, a universe where they were different would privilege one kind of observer over another. Why should we be concerned about that? Perhaps, in the words of Ludwig Wittgenstein, here we have hit bedrock and “our spade is turned”.

But what about the value of G? It seems as though this constant could take on any value at all. Some writers, notably Ross (1998), speculate that the exact value of G is fine-tuned. This is incorrect. The particular value that G has depends upon the units in which we choose to express physical quantities (in SI units, G has the value 6.67428 × 10⁻¹¹ m³ kg⁻¹ s⁻², but in Planck units G has the value 1). However, to conclude that because we can choose the value of G we have somehow done away with the question of fine-tuning when it comes to gravity is to miss the point. What we are really concerned with is why gravity has the strength it does. Changing the units in which we express G merely moves the problem around rather than eliminating it. Whatever units we express G in, we are faced with the problem of why masses (in particular those of the fundamental particles) have the values that they do.
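One way to make this unit-independence concrete is to form a dimensionless measure of the strength of gravity. A common choice (assumed here for illustration; the symbol $\alpha_G$ and the use of the proton mass $m_p$ are conventions, not something fixed by the argument above) is:

```latex
\alpha_G = \frac{G\, m_p^{2}}{\hbar c}
\approx \frac{(6.67\times10^{-11})\,(1.67\times10^{-27})^{2}}
             {(1.05\times10^{-34})\,(3.00\times10^{8})}
\approx 5.9\times10^{-39}
```

This number is the same in SI units, Planck units or any other system. In Planck units, where $G = \hbar = c = 1$, it is simply the square of the proton mass, so the question of why gravity is so weak becomes the question of why the proton mass is so small compared with the Planck mass.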

A full understanding of this surely depends on a physical theory which is able to account for mass. Although the Large Hadron Collider is looking, we have not yet found the Higgs boson. The strength of the interaction of the Higgs boson (or the Higgs field) with other particles in the standard model may yet predict the value of G, making all discussions about the fine-tuning of G (or otherwise) moot. A more fundamental theory still, such as string theory, may predict the relative strengths of all coupling constants such as the fine structure constant.

Were G much larger, and so gravity stronger, stars would quickly collapse into black holes before any life had a chance to arise on any planet. Were G much smaller, and gravity weaker, then the universe would have expanded far too quickly for big bang nucleosynthesis to have created any appreciable amount of matter. Given our current state of understanding, the value of G is not predicted by any theory, and we measure it to have a value consistent with our existence. And this is the heart of the matter.

It would seem then that if the form of the laws of physics is, in general, a consequence of considerations of point-of-view invariance, there is little to explain. It would require explanation if the laws were such that a certain position, momentum or direction was singled out. It might be argued that all physically possible universes are such that the laws of physics have the form that they do. But it is not merely the form of the laws that dictates the prevalence (or otherwise) of life. The laws contain free parameters like G. To what extent then are the parameters fine-tuned? There are roughly 26 free parameters in the standard model and there are various “anthropic coincidences” (such as the ratio of the mass of the proton to that of the neutron). Given the abundance of material on this issue and the sheer number of supposed examples of fine-tuning that have been written about, we restrict ourselves here to three illustrative examples: the parameters very generally considered, the Hoyle Resonance and finally the cosmological coincidence.

Fine-tuned parameters

One of the most frequently claimed examples of fine tuning is that involving one or more of the “constants” of nature. Although some authors continue to use the word “constant” – there is reliable evidence suggesting that some of these numbers may not be constant at all (see, for example, Murphy et al 2003) and so we will follow recent convention here and use the word “parameter” to refer to any of the 26 numbers which must be measured and are not predicted by the standard model. We have already discussed the example of G – the constant of proportionality in Newton’s Universal Law of Gravitation. But what is the effect of varying G on the emergence of life in the universe? Much of the material on this question fixates upon the effect of altering the strength of gravity while keeping all else equal. Paul Davies (2007) writes “If gravity were a bit stronger, all stars would be radiative and planets might not form; if gravity were somewhat weaker, all stars would be convective and supernovas might never happen. Either way, the prospects for life would be diminished.” I emphasize what I believe to be the most salient point: what change in other parameters (in this case, say the fine-structure constant and/or the analogous coupling constant for the strong force) would compensate for a slightly different strength of gravity?

The answer to this question can help us to quantify the concept of “fine-tuning”: we constrain the amount by which we can change the parameters, not one at a time but simultaneously, and observe the degree to which certain processes to which life is crucially sensitive are affected.

One method available to us is computer modeling of fictional universes with parameters which are more or less randomly assigned but where the form of the laws remains the same as our own. Victor Stenger has written a program called “MonkeyGod” where “toy universes” can be created by manipulating the magnitude of the parameters. His program allows the value of the fine-structure constant, the strong interaction constant, the mass of the proton and the mass of the electron to be varied. The program then outputs information about the minimum lifetime of a star, the radius of a planet and the Bohr radius (among other things). Stenger writes that the purpose of the program is mainly to “demonstrate that long-lived stars, which are probably required for the evolution of life, does not depend on some ‘fine tuning’ of the constants of nature but occurs for a wide range of parameters. It also shows that the large number coincidence first proposed by Weyl is not uncommon.” (StengerWeb, 2010). Hermann Weyl was the first in a long line of physicists, including Paul Dirac, who used a philosophy of physics and mathematics more akin to numerology than science to try to “explain” why some large (or small) significant numbers in the universe take on the values that they do. Of course many of the numbers both of these men were so enamoured of were not dimensionless and so could be set to almost any value depending upon the choice of units one takes.

Stenger summarises the significance of studies which make use of simulations like these in the philosophy journal Philo and admits the caveat “While these are really only "toy" universes, the exercise illustrates that many different universes are possible, even within the existing laws of physics” and that MonkeyGod uses only low-energy physics. Stenger reports a study of 100 randomly generated universes, carried out with the aim of looking at the lifetime of main sequence stars, and finds that “While a few lifetimes are low, most are probably high enough to allow time for stellar evolution and heavy element nucleosynthesis. Over half the universes have stars that live at least a billion years. Long life may not be the only requirement for life, but it certainly is not an unusual property of universes.” (Stenger, 2000). His conclusion is that, based on a simple study where some of the parameters are changed simultaneously, there is no reason to suggest that our universe is particularly fine-tuned. In the space of all possible universes “long-lived stars are not unusual, and thus most universes should have time for complex systems of some type to evolve.” (op. cit.) How then can we say that the parameters we observe are fine-tuned if the elements for organic chemistry are going to be generated over a wide range of possible parameter values?
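The kind of experiment described above can be sketched in a few lines of Python. This is not Stenger's MonkeyGod code. It assumes an order-of-magnitude estimate for the minimum stellar lifetime of the form t ~ (α²/α_G)(m_p/m_e)² ħ/(m_p c²), with α_G = G m_p²/(ħc), and it assumes that the fine-structure constant, proton mass and electron mass are each scattered log-uniformly over five orders of magnitude either side of their measured values while G is held fixed. The function names, the sampling range and the billion-year threshold default are illustrative choices.

```python
import random

# Measured values in SI units (rounded).
HBAR = 1.054571817e-34       # J s
C = 2.99792458e8             # m / s
G = 6.67430e-11              # m^3 kg^-1 s^-2
M_PROTON = 1.67262192e-27    # kg
M_ELECTRON = 9.1093837e-31   # kg
ALPHA = 7.2973525693e-3      # fine-structure constant (dimensionless)
SECONDS_PER_YEAR = 3.156e7


def min_stellar_lifetime_years(alpha, m_proton, m_electron, g=G):
    """Assumed order-of-magnitude estimate of the minimum stellar lifetime."""
    alpha_g = g * m_proton**2 / (HBAR * C)  # dimensionless strength of gravity
    t_seconds = (
        (alpha**2 / alpha_g)
        * (m_proton / m_electron) ** 2
        * HBAR / (m_proton * C**2)
    )
    return t_seconds / SECONDS_PER_YEAR


def sample_toy_universes(n, decades=5.0, seed=0):
    """Return stellar lifetimes (years) for n randomly generated toy universes."""
    rng = random.Random(seed)

    def scatter(value):
        # Log-uniform scatter over +/- `decades` orders of magnitude (assumption).
        return value * 10 ** rng.uniform(-decades, decades)

    return [
        min_stellar_lifetime_years(
            scatter(ALPHA), scatter(M_PROTON), scatter(M_ELECTRON)
        )
        for _ in range(n)
    ]


def fraction_long_lived(lifetimes, threshold_years=1e9):
    """Fraction of universes whose stars live at least `threshold_years`."""
    return sum(t >= threshold_years for t in lifetimes) / len(lifetimes)
```

With the measured values the estimate comes out at several hundred million years. Calling `fraction_long_lived(sample_toy_universes(100))` gives the share of toy universes whose stars last at least a billion years under these assumptions; the result depends on the sampling range chosen and should not be read as a reproduction of Stenger's figures.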

So perhaps the laws and parameters are not fine-tuned for long-lived stars. But what about intelligent life? Lineweaver was not the first and is far from alone when he writes “Human intelligence is not a convergent feature of evolution” (Lineweaver, 2009). It would seem then that there is no evidence that the universe is fine-tuned for intelligent life. Given the failure so far to recreate life in the laboratory using Miller-Urey style experiments, along with the absence of any detection of extraterrestrial life, it is still an open question just how friendly towards life in general the universe might be. Because we cannot draw conclusions based upon evidence we do not yet have, we are constrained to point not to the life we cannot observe but rather to the carbon that we can. We know that carbon is essential for life. Are the laws of physics fine-tuned then for carbon production? It is to this question we now turn.

The Hoyle Resonance

One of the more frequently cited examples of fine-tuning is that of the Hoyle Resonance. This is the apparently fortuitous existence of an excited state of the carbon-12 nucleus which permits the triple-alpha reaction to proceed at a sufficient rate to account for the amount of carbon we observe in the universe.

Historically, the production of carbon was problematic because the only feasible scenario for carbon creation, the collision of three helium nuclei, was highly improbable. It was shown in 1949 that for the three-body collision to have any chance of overcoming the Coulomb barrier and producing appreciable amounts of carbon, a temperature of the order of 10⁸ K was required (Salpeter, 1949). However, the temperature at the core of a star is only of the order of 10⁷ K.

Salpeter in 1952 suggested that two helium nuclei fuse first to produce a beryllium nucleus thus:

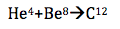

It was recognized that the beryllium nucleus is extremely unstable, with a half-life of only 3 × 10⁻¹⁶ seconds. This beryllium nucleus, although short-lived, at least had a chance of fusing with another helium nucleus to produce carbon:

But even this pathway was shown to be highly unlikely to occur at a rate sufficient to account for the amount of carbon actually observed in the universe.

It was Fred Hoyle who first predicted the existence of a resonant energy level at 7.7 MeV which would help catalyze the production of carbon (Hoyle, 1953).
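Written out schematically, the two-step route to carbon described above, including the excited state that Hoyle proposed, is as follows (the notation $^{12}\mathrm{C}^{*}$ for the excited nucleus is a convention assumed here, and the decay to the ground state is shown in simplified form):

```latex
\begin{aligned}
{}^{4}\mathrm{He} + {}^{4}\mathrm{He} &\rightleftharpoons {}^{8}\mathrm{Be} \\
{}^{8}\mathrm{Be} + {}^{4}\mathrm{He} &\rightarrow {}^{12}\mathrm{C}^{*}
  \rightarrow {}^{12}\mathrm{C} + \gamma
\end{aligned}
```

The resonance matters because it greatly increases the chance that the second step happens before the beryllium nucleus falls apart.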

Does this observation of the resonance of carbon suggest something deeper about the structure of the universe? One of the more prominent physicists to have worked under Hoyle is Paul Davies. His 2007 book, “The Goldilocks Enigma”, provides a popular interpretation of the importance of the Hoyle Resonance (p. 157): “The carbon story left a deep impression on Hoyle. He realized that if it weren’t for the coincidence that a nuclear resonance exists at just the right energy, there would be next to no carbon in the universe, and probably no life.”

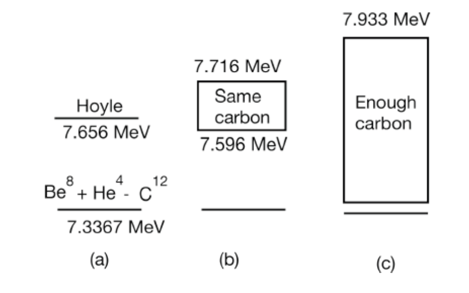

Now everything here turns on the words “just” and “next to”. Just how precise must the energy be for it to be “just” right for resonance to occur? And if the energy were not “just” right, exactly how much carbon is “next to” none? Crucially, there exist calculations that can quantify these otherwise grey modalities. Livio et al. (1989) calculate that although the resonant energy of the carbon nucleus occurs at 7.7 MeV, facilitating the triple-alpha reaction, there nonetheless exists a range of energies over which the same amount of carbon will be produced and an even greater range of energies over which sufficient carbon would be produced for life. The figure below, taken from Stenger (2010), illustrates this point:

Figure (a) shows the carbon resonance above the energy of the Beryllium and Helium Nuclei as predicted by Hoyle and subsequently observed by experiment. Figure (b) shows the range of values over which the resonance could occur and still produce the same amount of carbon. Figure (c) shows the even wider range of values over which the resonance could occur and still produce enough carbon for life.

As Stenger points out, “…it is often claimed that this excited state has to be fine-tuned to precisely this value in order for carbon-based life to exist. This is not true.” (Stenger, 2002). It is clear that there is a reasonably broad range over which “enough carbon” is produced for life. But Davies quotes Hoyle himself a number of times as saying that the resonance seems like a “put up job.” (Davies, 2007, p. 158). Where Hoyle and Davies see something mysterious, Stenger sees something more mundane. However even Stenger, one of the strongest critics of fine-tuning, claims the Hoyle resonance as “the only successful anthropic prediction” (Stenger, 2007).

A paper published in May of 2010 sheds more light on the Hoyle Resonance than even Stenger seems aware of. It seems that only after the fact did Hoyle himself begin to think of his prediction as anthropic. And this was after others claimed it was anthropic for him. Kragh (2010) discusses at length how Hoyle’s prediction of the resonance was clearly not anthropic. It was a consequence of astrophysics with no anthropic considerations whatsoever. Hoyle considered only the observed abundance of carbon in the universe, not the observation of life in the universe. And yet the myth has persisted ever since. His paper is appropriately titled “When is a prediction anthropic? Fred Hoyle and the 7.65 MeV carbon resonance” and concludes in part with the statement that “Contrary to the folklore version of the prediction story, Hoyle did not originally connect it with the existence of life. The popular association with the anthropic principle is of later date and has no basis in historical fact, something many authors seem to be unaware of or just do not care about.”

The lesson here for “fine-tuning” seems clear. The degree to which something is claimed as being fine-tuned depends crucially upon the extent to which we understand the phenomenon. It seems as though the Hoyle Resonance is not particularly fine-tuned, nor is the anthropic principle needed in order to explain it.

Keeping these lessons in mind we turn briefly then to our final example and perhaps most famous apparent case of fine-tuning – the infamous ‘worst prediction in physics’.

The Cosmological Coincidence

Observations first published in 1998 show that the universe is not only expanding but that the rate of expansion is accelerating (Riess et al., 1998). An accelerating expansion is most frequently explained by a positive cosmological constant. A positive cosmological constant is mathematically equivalent to the vacuum energy predicted to exist by quantum theory.

A region of space devoid of matter nonetheless contains energy, known as “zero-point energy”, as a consequence of Heisenberg’s Uncertainty Principle. The theoretical prediction from quantum theory for the zero-point energy in the universe is around 10¹¹⁵ GeV/cm³, but the observed energy density of the “dark energy” driving the accelerated expansion of the universe is only about 10⁻⁵ GeV/cm³ (figures from Weinberg, 1989). This discrepancy of some 120 orders of magnitude between theory and observation is known as the vacuum energy problem and has been called the worst prediction in physics. It is currently unknown what is causing the cancellation, and why the cancellation is not exactly complete. Weinberg suggests (following Linde) that the explanation might be that what we call the universe “may be just one fragment of a much larger universe in which big bangs go off all the time, each one with different values for the fundamental constants” (Weinberg, 1999) and each one (therefore) with a more or less fine-tuned cosmological constant. One mechanism for universe creation can be found in Lee Smolin’s Cosmological Natural Selection.
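The size of this mismatch can be checked with a short calculation. The sketch below estimates the observed dark energy density from the critical density of the universe and compares it with the theoretical figure quoted above. The Hubble constant of 70 km/s/Mpc and the dark energy fraction of 0.7 are round values assumed for illustration, and the function names are hypothetical.

```python
import math

G = 6.67430e-11                    # m^3 kg^-1 s^-2
C = 2.99792458e8                   # m / s
JOULES_PER_GEV = 1.602176634e-10
METRES_PER_MPC = 3.0857e22

H0_KM_S_MPC = 70.0                 # assumed round value of the Hubble constant
OMEGA_LAMBDA = 0.7                 # assumed dark energy fraction of critical density
THEORY_LOG10_GEV_CM3 = 115.0       # theoretical figure quoted in the text


def dark_energy_density_gev_per_cm3(h0_km_s_mpc=H0_KM_S_MPC,
                                    omega_lambda=OMEGA_LAMBDA):
    """Observed dark energy density estimated from the critical density."""
    h0 = h0_km_s_mpc * 1e3 / METRES_PER_MPC            # Hubble constant in s^-1
    rho_crit = 3 * h0**2 / (8 * math.pi * G)            # critical density, kg / m^3
    energy_density = omega_lambda * rho_crit * C**2     # J / m^3
    return energy_density / JOULES_PER_GEV / 1e6        # GeV / cm^3


def discrepancy_in_orders(observed_gev_per_cm3,
                          theory_log10=THEORY_LOG10_GEV_CM3):
    """Number of orders of magnitude between theory and observation."""
    return theory_log10 - math.log10(observed_gev_per_cm3)
```

Under these assumptions the observed density comes out at a few times 10⁻⁶ GeV/cm³, in line with the order of magnitude quoted above, and `discrepancy_in_orders(dark_energy_density_gev_per_cm3())` returns a gap of roughly 120 orders of magnitude.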

Cosmological Natural Selection

Smolin takes up the claim that the Anthropic Principle is unscientific because it is unfalsifiable. Falsifiability is an essential idea in science: it has been argued (Popper, 1963) that it is the criterion that demarcates science from non-science. Smolin proposes that universes reproduce through black holes. A black hole warps spacetime so severely that, on this view, it can give rise to another universe. On average, universes unable to form black holes do not reproduce, while those that do allow the formation of black holes go on to produce progeny. This version of the multiverse theory is in principle testable, according to Smolin. We need only look more closely at black holes (Smolin, 1997).

Smolin’s cosmological natural selection is not the only seriously considered theory of multiple universes and therefore not the only opposition to the anthropic model. The idea of other worlds has its roots in Greek philosophy and was seriously considered by the 17th century philosopher Leibniz to help him explain how this was “the best of all possible worlds”. In more recent times the realist philosopher David Lewis wrote “On the Plurality of Worlds” in order to explain counterfactual statements, and he took seriously the physical reality of other logically possible worlds. Max Tegmark has since elevated this idea to that of a physical theory. A different idea, first suggested by Hugh Everett, is the realist conception of quantum theory that demands the physical reality of other parallel universes. David Deutsch in “The Fabric of Reality” argues that his discovery of the theory of the universal quantum computer only makes sense if the multiverse actually exists. Published in the Proceedings of the Royal Society, his paper “The Structure of the Multiverse” (Deutsch, 2002) lays down the mathematical groundwork and physical theory behind what he considers to be the reality required for quantum computation and for the realistic interpretation of quantum observations.

Conclusions

entia non sunt multiplicanda

praeter necessitatem

Entities should not be multiplied

beyond necessity

-William of Ockham (c. 1287–1347)

How surprised should we be that we find ourselves in a place that seems remarkably well suited for us? Clearly whatever the conditions are, they must be consistent with the observation that we exist. This weak version of the anthropic principle does little more than state a tautology: we observe the universe to be such that we can exist, because we exist. Must the universe be this way? There seems little evidence that any stronger versions of the anthropic principle are backed by physical evidence. Apparent examples of fine-tuning, like the Hoyle Resonance, seem to be in the eye of the beholder, while other examples such as the values of the cosmological constant and the fine structure constant may be little more than observer selection effects. In the case of the latter, the far reaches of the universe may actually have different values, while in the case of the former we necessarily observe a universe where the cosmological constant takes on a value consistent with our existence. That it is not identically zero may be evidence for the existence of other worlds. The lineage of sober philosophers and physicists who take or have taken the notion of other universes seriously is long and growing.

On the one hand we have a single-universe model, exquisitely fine-tuned for life by an otherwise unexplained fine-tuner; on the other, an infinite class (or at least an extraordinarily large set) of actually existing other universes postulated to explain the existence of one observed universe. While the former is apparently parsimonious on universes, the latter is parsimonious on assumptions.

William of Ockham only implores us not to multiply entities beyond necessity. He says nothing about universes.

References

Adams, D. 1998 Speech given at “Digital Biota”. Available online at http://www.biota.org/people/douglasadams/index.html

Barrow J., Tipler F., 1986 “The Anthropic Cosmological Principle” Oxford University Press

Bostrom, Nick. 2002 “Anthropic Bias: Observation Selection Effects in Science and Philosophy” Routledge

Carter, B 1973 "Large Number Coincidences and the Anthropic Principle in Cosmology". IAU Symposium 63: Confrontation of Cosmological Theories with Observational Data. Dordrecht: Reidel. pp. 291–298.

- 2004 “The Anthropic Principle in Cosmology” LuTh, Observatoire de Paris-Meudon. Contribution to Colloquium “Cosmology: Facts and problems” (Collège de France, June 2004).

Deutsch, D. 2002 “The Structure of the Multiverse” Proceedings of the Royal Society A 8 December 2002 vol. 458 no. 2028 2911-2923 full text available at http://rspa.royalsocietypublishing.org/content/458/2028/2911.abstract

Hawking, S. 1995 - From an interview with Ken Campbell on the television program “Reality on the Rocks”. Video available at YouTube: http://www.youtube.com/watch?v=S3aadgf0GH8

Hoyle, F. et al. 1953 “A State in C12 Predicted From Astronomical Evidence,” Physical Review 92 (1953): 1097. (Interestingly, this paper, one of the most frequently cited in the literature on anthropics and fine-tuning, was almost impossible to find and turns out not to be a complete paper but rather only the abstract of a lecture).

Kragh, H - 2010 “When is a prediction anthropic? Fred Hoyle and the 7.65 MeV carbon resonance”. PhilSci Archive available at http://philsci-archive.pitt.edu/archive/00005332/

Lineweaver C. & Egan C. 2007 “The Cosmic Coincidence as a Temporal Selection Effect Produced by the Age Distribution of Terrestrial Planets in the Universe” Astrophysical Journal, December 10, 2007, Vol 671, 853 available at astro-ph/0703429

Lineweaver, C. 2009 “Paleontological tests: Human-like intelligence is not a convergent feature of evolution” in From Fossils to Astrobiology edt. J. Seckbach & M. Walsh Vol 13 of a series on Cellular Origins and Life in Extreme Habitats and Astrobiology, Springer, pp 353-368

Livio, M., Hollowell D., Weiss, A. & Truran J. 1989 “The anthropic significance of the existence of an excited state of 12C” Nature 340, 281-284

Murphy, M., Webb, J., Flambaum, V. 2003 “Further evidence for a variable fine structure constant from Keck/HIRES QSO absorption spectra”. Monthly Notices of the Royal Astronomical Society (MNRAS) 345, 609 (2003). Available at http://arxiv.org/abs/astro-ph/0306483

Popper, K. 1963 “Conjectures and Refutations: The Growth of Scientific Knowledge” Routledge and Keagan Paul (1963)

Reiss A., et al (1998) “Observational evidence from supernovae for an accelerating universe and a cosmological constant” Astronomical Journal 116: 1009-38

Ross, H. 1998 “The Creator and the Cosmos” Navpress.

Salpeter, E. 1952. “Nuclear reactions in stars without hydrogen” Astrophysical Journal 115 326-328

Smolin, L. 1997 “The life of the Cosmos” Oxford University Press.

Stenger, V 2000 “Natural Explanations for the Anthropic Coincidences”

Philo, Vol. 3 No. 2, Fall-Winter 2000, pp. 50-67 available at http://www.colorado.edu/philosophy/vstenger/anthro.html (Note, this website also contains a link to MonkeyGod – Stenger’s simulation toy).

-2006 “The Comprehensible Cosmos” Prometheus Books.

-2007 “The Anthropic Principle” Chapter in “The Encyclopaedia of Non-belief” Tom Flynn, ed., Prometheus Books 2007, pp. 65-70.

-2010 “Is Carbon production fine-tuned for life?” Skeptical Briefs Vol. 20 No. 1 March, 2010

StengerWeb - 2010 – Victor Stenger’s Homepage about Anthropics accessed http://www.colorado.edu/philosophy/vstenger/anthro.html

Weinberg, S. “The Cosmological Constant Problem” Reviews of Modern Physics 61: 1–23 (1989)

Weinberg, S. “A Designer Universe?” (1999)

Available at http://www.physlink.com/education/essay_weignberg.cfm

As Stenger points out, “…it is often claimed that this excited state has to be fine-tuned to precisely this value in order for carbon-based life to exist. This is not true.” (Stenger, 2002). It is clear that there is a reasonably broad range over which “enough carbon” is produced for life. Yet Davies quotes Hoyle himself a number of times as saying that the resonance seems like a “put up job” (Davies, 2007, p. 158). Where Hoyle and Davies see something mysterious, Stenger sees something more mundane. However, even Stenger, one of the strongest critics of fine-tuning, claims the Hoyle resonance as “the only successful anthropic prediction” (Stenger, 2007).

A paper published in May of 2010 sheds more light on the Hoyle Resonance than even Stenger seems aware of. It seems that only post facto did Hoyle himself begin to think of his prediction as anthropic, and this was after others had claimed it was anthropic for him. Kragh (2010) discusses at length how Hoyle’s prediction of the resonance was clearly not anthropic. It was a consequence of astrophysics with no anthropic considerations whatsoever. Hoyle considered only the observed abundance of carbon in the universe, not the observation of life in the universe. And yet the myth has persisted ever since. Kragh’s paper is appropriately titled “When is a prediction anthropic? Fred Hoyle and the 7.65 MeV carbon resonance” and concludes in part with the statement that “Contrary to the folklore version of the prediction story, Hoyle did not originally connect it with the existence of life. The popular association with the anthropic principle is of later date and has no basis in historical fact, something many authors seem to be unaware of or just do not care about.”

The lesson here for “fine-tuning” seems clear. The degree to which something is claimed to be fine-tuned depends crucially upon the extent to which we understand the phenomenon in question. It seems that the Hoyle Resonance is not particularly fine-tuned, nor is the anthropic principle needed in order to explain it.

Keeping these lessons in mind, we turn briefly to our final example, perhaps the most famous apparent case of fine-tuning: the infamous ‘worst prediction in physics’.

The Cosmological Coincidence

Observations first published in 1998 show that the universe is not only expanding, but that the rate of expansion is accelerating (Riess et al., 1998). An accelerating expansion is most frequently explained by a positive cosmological constant. A positive cosmological constant is mathematically equivalent to the vacuum energy predicted to exist by quantum theory.

Otherwise empty space contains energy as a consequence of Heisenberg’s Uncertainty Principle: a region of space devoid of matter still contains what is known as “zero-point energy”. The theoretical prediction from quantum theory for the zero-point energy density of the universe is around 10^115 GeV/cm^3, but the observed energy density of the “dark energy” driving the accelerated expansion of the universe is only 10^-5 GeV/cm^3 (figures from Weinberg, 1989). This mismatch between theory and observation, a factor of 10^120, is known as the vacuum energy problem and has been called the worst prediction in physics. It is currently unknown what is causing the cancellation, and why the cancellation is not exactly complete. Weinberg suggests (following Linde) that the explanation might be that what we call the universe “may be just one fragment of a much larger universe in which big bangs go off all the time, each one with different values for the fundamental constants” (Weinberg, 1999) and each one (therefore) with a more or less fine-tuned cosmological constant. One mechanism for universe creation can be found in Lee Smolin’s Cosmological Natural Selection.
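The size of the mismatch quoted above can be checked with a line of arithmetic. The following is only a minimal sketch: the two densities are the order-of-magnitude figures cited from Weinberg (1989), and the function name orders_of_magnitude is an illustrative choice, not something taken from the literature.

```python
import math

# Order-of-magnitude figures quoted in the text (Weinberg, 1989), in GeV/cm^3.
PREDICTED_VACUUM_DENSITY = 1e115   # zero-point energy density from quantum theory
OBSERVED_DARK_ENERGY_DENSITY = 1e-5  # density inferred from the accelerating expansion


def orders_of_magnitude(predicted, observed):
    """Return how many powers of ten separate two positive quantities."""
    return math.log10(predicted / observed)


gap = orders_of_magnitude(PREDICTED_VACUUM_DENSITY, OBSERVED_DARK_ENERGY_DENSITY)
print(f"Theory exceeds observation by about {gap:.0f} orders of magnitude")
```

Because both inputs are themselves rough estimates, the result should be read as "roughly 120 orders of magnitude" rather than as a precise figure.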

Cosmological Natural Selection

Smolin takes up the claim that the Anthropic Principle is unscientific because it is unfalsifiable. Falsifiability is an essential idea in science: it has been argued (Popper, 1963) that it is the criterion that demarcates science from non-science. Smolin proposes that universes reproduce through black holes. A black hole is able to warp spacetime and in doing so can produce other universes. On average, universes unable to breed black holes do not reproduce, while those that do allow the formation of black holes go on to produce progeny. This version of the multiverse theory is, according to Smolin, testable in principle: we need only look more closely at black holes (Smolin, 1997).
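The selection argument in the paragraph above can be illustrated with a toy calculation. This is not Smolin’s own model: it is a deliberately simplified sketch that assumes a handful of hypothetical universe “types”, each producing a fixed number of black holes, with every black hole giving rise to one offspring universe of the same type. The yields and starting shares below are invented purely for illustration.

```python
def next_generation(shares, black_holes_per_universe):
    """Population share of each universe type after one round of reproduction.

    Each universe leaves as many offspring as it has black holes, so a type's
    new share is proportional to (old share) x (black holes per universe).
    """
    offspring = [s * b for s, b in zip(shares, black_holes_per_universe)]
    total = sum(offspring)
    if total == 0:
        raise ValueError("no universe type produces black holes; the lineage ends")
    return [o / total for o in offspring]


def evolve(shares, black_holes_per_universe, generations):
    """Return the list of population shares for generation 0..generations."""
    history = [list(shares)]
    for _ in range(generations):
        shares = next_generation(shares, black_holes_per_universe)
        history.append(shares)
    return history


# Hypothetical black-hole yields for four universe types (assumed values).
YIELDS = [0, 1, 2, 3]
# Start with all four types equally common (also an assumption).
START = [0.25, 0.25, 0.25, 0.25]

history = evolve(START, YIELDS, 10)
for generation in (0, 1, 5, 10):
    print(generation, [round(share, 4) for share in history[generation]])
```

In this sketch the type that makes no black holes disappears after a single generation, and the most prolific type comes to dominate the population. That is the sense in which, on Smolin’s proposal, a typical universe would be expected to have laws favourable to black hole formation.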

Smolin’s cosmological natural selection is not the only seriously considered theory of multiple universes and therefore not the only opposition to the anthropic model. The idea of other worlds has its roots in Greek philosophy and was seriously considered by the 17th century philosopher Leibniz to help him explain how this was “the best of all possible worlds”. In more recent times the realist philosopher David Lewis wrote “On the Plurality of Worlds” in order to explain counterfactual statements, and he took seriously the physical reality of other logically possible worlds. Max Tegmark has since elevated this idea to that of a physical theory. A different idea, first suggested by Hugh Everett, is the realist conception of quantum theory that demands the physical reality of other parallel universes. David Deutsch in “The Fabric of Reality” argues that his discovery of the theory of the universal quantum computer only makes sense if the multiverse actually exists. Published in the Proceedings of the Royal Society, his paper “The Structure of the Multiverse” (Deutsch, 2002) lays down the mathematical groundwork and physical theory behind what he considers to be the reality required for quantum computation and for the realistic interpretation of quantum observations.

Conclusions

entia non sunt multiplicanda

praeter necessitatem

Entities should not be multiplied

beyond necessity

-William of Ocham (c. 1287–1347)

How surprised should we be that we find ourselves in a place that seems remarkably well suited for us? Clearly whatever the conditions are, they must be consistent with the observation that we exist. This weak version of the anthropic principle does little more than state a tautology: the universe we observe must be one in which we can exist. Must the universe be this way? There seems little physical evidence to back any stronger version of the anthropic principle. Apparent examples of fine-tuning, like the Hoyle Resonance, seem to be in the eye of the beholder, while other examples such as the values of the cosmological constant and the fine structure constant may be little more than observer selection effects. In the case of the latter, the far reaches of the universe may actually have different values, while in the case of the former we necessarily observe a universe where the cosmological constant takes on a value consistent with our existence. That it is not identically zero may be evidence for the existence of other worlds. The lineage of sober philosophers and physicists who take or have taken the notion of other universes seriously is long and growing.

On the one hand we have a single-universe model, exquisitely fine-tuned for life by an otherwise unexplained fine-tuner; on the other, an infinite class (or at least an extraordinarily large set) of actually existing other universes postulated to explain the existence of one observed universe. While the former is apparently parsimonious on universes, the latter is parsimonious on assumptions.

William of Ocham only implores us not to multiply entities beyond necessity. He says nothing about universes.

References

Adams, D. 1998 Speech given at “Digital Biota”. Available online at http://www.biota.org/people/douglasadams/index.html

Barrow J., Tipler F., 1986 “The Anthropic Cosmological Principle” Oxford University Press

Bostrom, Nick. 2002 “Anthropic Bias: Observation Selection Effects in Science and Philosophy” Routledge

Carter, B 1973 "Large Number Coincidences and the Anthropic Principle in Cosmology". IAU Symposium 63: Confrontation of Cosmological Theories with Observational Data. Dordrecht: Reidel. pp. 291–298.

- 2004 “The Anthropic Principle in Cosmology” LuTh, Observatoire de Paris-Meudon. Contribution to Colloquium “Cosmology: Facts and problems” (Collège de France, June 2004).

Deutsch, D. 2002 “The Structure of the Multiverse” Proceedings of the Royal Society A 8 December 2002 vol. 458 no. 2028 2911-2923 full text available at http://rspa.royalsocietypublishing.org/content/458/2028/2911.abstract

Hawking, S. 1995 - From an interview with Ken Campbell on the television program “Reality on the Rocks”. Video available at YouTube: http://www.youtube.com/watch?v=S3aadgf0GH8

Hoyle, F. et al. 1953 “A State in C12 Predicted From Astronomical Evidence” Physical Review 92: 1097. (Interestingly, this paper, one of the most frequently cited in the literature on anthropics and fine-tuning, was almost impossible to find and turns out not to be a complete paper but rather only the abstract of a lecture.)

Kragh, H - 2010 “When is a prediction anthropic? Fred Hoyle and the 7.65 MeV carbon resonance”. PhilSci Archive available at http://philsci-archive.pitt.edu/archive/00005332/

Lineweaver C. & Egan C. 2007 “The Cosmic Coincidence as a Temporal Selection Effect Produced by the Age Distribution of Terrestrial Planets in the Universe” Astrophysical Journal, December 10, 2007, Vol 671, 853 available at astro-ph/0703429

Lineweaver, C. 2009 “Paleontological tests: Human-like intelligence is not a convergent feature of evolution” in From Fossils to Astrobiology, eds. J. Seckbach & M. Walsh, Vol 13 of a series on Cellular Origins and Life in Extreme Habitats and Astrobiology, Springer, pp 353-368

Livio, M., Hollowell D., Weiss, A. & Truran J. 1989 “The anthropic significance of the existence of an excited state of 12C” Nature 340, 281-284

Murphy, M., Webb, J., Flambaum, V. 2003 “Further evidence for a variable fine structure constant from Keck/HIRES QSO absorption spectra” Monthly Notices of the Royal Astronomical Society (MNRAS) 345, 609. Available at http://arxiv.org/abs/astro-ph/0306483

Popper, K. 1963 “Conjectures and Refutations: The Growth of Scientific Knowledge” Routledge and Kegan Paul

Riess A., et al. 1998 “Observational evidence from supernovae for an accelerating universe and a cosmological constant” Astronomical Journal 116: 1009-38

Ross, H. 1998 “The Creator and the Cosmos” Navpress.

Salpeter, E. 1952. “Nuclear reactions in stars without hydrogen” Astrophysical Journal 115 326-328

Smolin, L. 1997 “The Life of the Cosmos” Oxford University Press.

Stenger, V 2000 “Natural Explanations for the Anthropic Coincidences” Philo, Vol. 3 No. 2, Fall-Winter 2000, pp. 50-67 available at http://www.colorado.edu/philosophy/vstenger/anthro.html (Note: this website also contains a link to MonkeyGod, Stenger’s simulation toy).

-2006 “The Comprehensible Cosmos” Prometheus Books.

-2007 “The Anthropic Principle” Chapter in “The Encyclopaedia of Non-belief” Tom Flynn, ed., Prometheus Books 2007, pp. 65-70.

-2010 “Is Carbon production fine-tuned for life?” Skeptical Briefs Vol. 20 No. 1 March, 2010

StengerWeb - 2010 – Victor Stenger’s Homepage about Anthropics accessed http://www.colorado.edu/philosophy/vstenger/anthro.html

Weinberg, S. 1989 “The Cosmological Constant Problem” Reviews of Modern Physics 61: 1–23

Weinberg, S. 1999 “A Designer Universe?” Available at http://www.physlink.com/education/essay_weignberg.cfm